My first solution is using dynamic programming. Then I want to also try breath-first-search. The first version of my BFS:

class Solution:

def coinChange(self, coins: List[int], amount: int) -> int:

if amount == 0:

return 0

# bfs

depth, ans = 1, -1

queue = [amount]

while len(queue) > 0:

new_queue = []

for node in queue:

for coin in coins:

if coin > node:

continue

if coin == node:

return depth

else:

new_queue.append(node-coin)

queue = new_queue

depth += 1

return ansIt could get the correct answer but met TLE (Time Limit Exceeded) error when running the below case:

[2,3,5,7,11,13,17,19,21] 9999

After checking the variables “queue” and “new_queue”, I noticed there are many duplicate values. Therefore I set them to “set” instead of “list”:

class Solution:

def coinChange(self, coins: List[int], amount: int) -> int:

if amount == 0:

return 0

# bfs

depth, ans = 1, -1

queue = {amount}

while len(queue) > 0:

new_queue = set()

for node in queue:

for coin in coins:

if coin > node:

continue

if coin == node:

return depth

else:

new_queue.add(node-coin)

queue = new_queue

depth += 1

return ansIt’s faster but still costs about 5 seconds (This is too long for a contest in LeetCode).

Actually, there are still many duplicated values. Not in one “queue”, but in different depths. Let me take this case:

Input: coins = [1,2,5], amount = 11

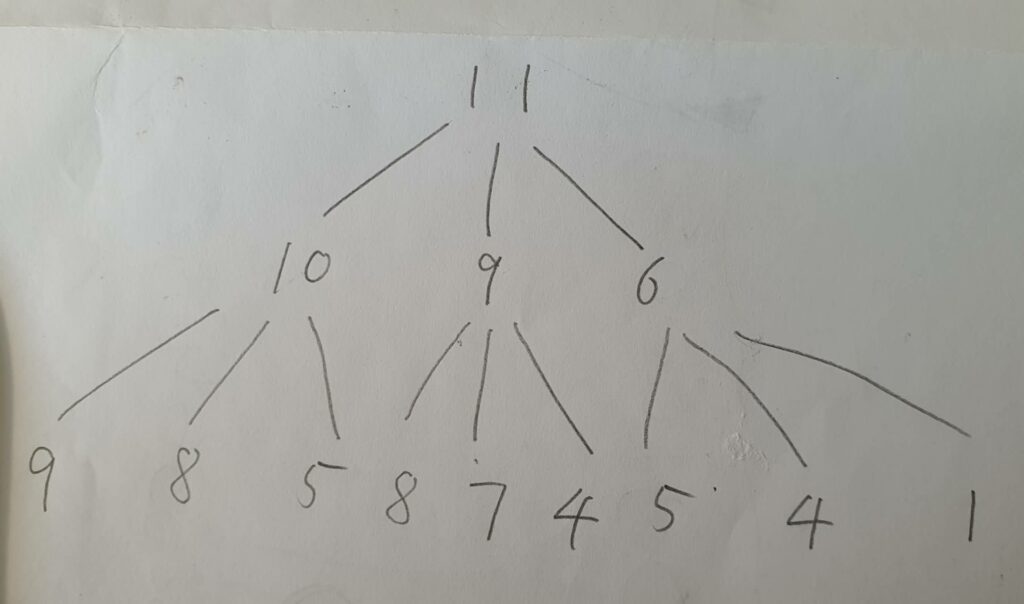

as an example. The BFS of it looks like this:

The program already starts to check “9” at the second layer, so it’s just a waste of time to check “9” again in the third layer. Thus, we can ignore any number in “new_queue” that is already in all the previous “queues”.

I will add a new set called “already” to record all the values the program ALREADY TO PROCESS, and minus it before searching the next depth. The new code only costs 90ms:

class Solution:

def coinChange(self, coins: List[int], amount: int) -> int:

if amount == 0:

return 0

# bfs

depth, ans = 1, -1

queue = {amount}

already = set()

while len(queue) > 0:

new_queue = set()

for node in queue:

for coin in coins:

if coin > node:

continue

if coin == node:

return depth

else:

new_queue.add(node-coin)

already |= queue

queue = new_queue - already

depth += 1

return ans———- 2023.01.24 ———-

Seems other BFS problems could also borrow this idea. Like this one I made https://leetcode.com/submissions/detail/884138668/